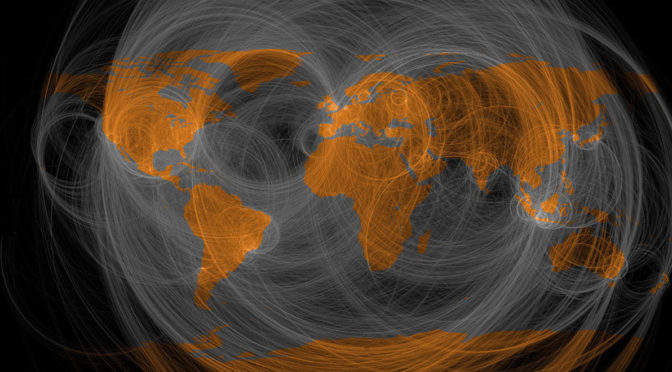

Featured image above: The fourth industrial revolution will bring 75 billion connected devices to the world by 2020. Credit: World Economic Forum / Pierre Abensur

The Fourth Industrial Revolution will arguably become the most disruptive and transformative shift in history, and it’s happening at a rapid pace. Experts from all over the world are discussing how technologies such as artificial intelligence, 3D printing, robotics and biotechnology will reshape nearly every industry, from manufacturing and retail to entertainment and healthcare.

But one of the biggest areas of transformation will happen within the social sector. Nonprofits, NGOs and education institutions have a tremendous opportunity to leverage new technologies to scale up their impact and ultimately achieve their critical missions.

The Fourth Industrial Revolution offers huge opportunities to transform social good organisations for the better. Here are five key ways nonprofits, NGOs and education institutions can benefit:

1. Connect to anyone, from anywhere, on any device

The digital era has allowed more people from more places around the globe to become connected. For the first time, people in remote places have access to other people, resources and aid through connected devices. There’s a huge opportunity for nonprofits and education institutions to reach more people than ever before and connect them with their cause. Today, nonprofits and education organisations can connect with their donors, volunteers, students and constituents in real time from anywhere. At schools, for example, a student advisor can send a text message or push notification the minute they see a student falling behind. Nonprofits can instantly reach their community of donors and volunteers to help with urgent matters that may mean the difference between life and death.

2. Scale like never before

Because we’re more connected than ever before, social good organisations can also scale like never before. Historically, a lack of resources and funding has plagued the social sector, but technology can help small organisations make a big impact. Now, whether an organisation has 8 or 8,000 employees, the number of people it can reach is no longer limited by its size. Populations that were previously unreachable can now be reached and connected with particular causes without drastically increasing overhead costs. Passionate individuals who may previously have felt helpless will be able to start international movements with minimal resources.

3. Organise communities and engage more deeply

With the arrival of the fourth industrial revolution, organisations can also start to organise and understand these communities better than ever before, resulting in deeper engagement. A nonprofit, for example, can organise its community based on region, specific causes, engagement level and more, and communicate with these groups or individuals in a way that’s highly personalised. According to the recently released Connected Nonprofit Report, 65% of donors would give more money if they felt their nonprofits knew their personal preferences, and 75% of volunteers would give more time. With deeper engagement, these organisations will start to see increases in donations and volunteer time, which directly advance their mission. Schools and education organisations can create curricula and course tools around specific learning styles and preferences in order to engage students more deeply and improve their education experience.

4. Predict outcomes

Not only is everyone becoming connected, but everything is becoming connected. In fact, there are expected to be up to 75 billion connected devices in the world by 2020 that will generate trillions of interactions. Advances in artificial intelligence and deep learning are helping make sense of this massive amount of data to deliver actionable insights to businesses and organisations alike. Artificial intelligence could perhaps be the biggest disrupter of all. For the social sector, that means services can recognise patterns within a community or particular cause and predict future outcomes. For example, education institutions can recognise patterns within a student’s journey, so teachers and advisors can proactively reach out to students who may be in danger of failing or dropping out before it happens. A nonprofit focused on the humanitarian crisis could identify the specific location and number of refugees coming into different countries, and preemptively send the appropriate level of aid and supplies.

5. Measure impact

Today, 90% of donors think it’s important to understand how their money is impacting the organisations they support, but more than half of donors don’t know how their money is being used, according to the Connected Nonprofit Report. As we look toward the future, the measure of nonprofit success will not be the number of dollars raised; it will be the impact made on the communities they serve. Historically, impact has been difficult to quantify, but with advances in data and analytics, social good organisations can measure how they are performing. This will be crucial to retain and attract the donors and volunteers who help make these organisations possible.

Social good in the fourth industrial revolution

Technology can create, inform and drive global change. Social sector organisations can use it to find and connect with more people who need their services, understand their communities on a deeper level, predict outcomes so they are better prepared and can possibly prevent certain situations, and even measure the impact they’re making for their cause.

But it’s up to social good organisations to take advantage of these opportunities, and quickly.

– Rob Acker, CEO, Salesforce

This article on the fourth industrial revolution was first published by the World Economic Forum.